Two years ago, the question was “can AI agents work?” Today, the question is different: “can AI agents work reliably, consistently, and at scale?”

That shift created an entirely new engineering discipline. If you’ve heard the term “harness engineering” but aren’t sure what it means or why senior engineers are building careers around it, this guide is your starting point.

We’ll cover what harness engineering actually is, where the term comes from, the three core pillars that define the discipline, and what you need to learn to get started. No prerequisites required beyond basic technical curiosity.

Where the Term Comes From

The word “harness” in this context borrows from horse tack: the reins, saddle, bit, and bridle that together allow a rider to channel a powerful animal in a specific direction. The horse provides the raw power. The harness provides the control.

In AI systems, the “horse” is the large language model. GPT-4, Claude, Gemini, Llama: these models are capable, fast, and increasingly powerful. But on their own, they don’t know where to go. They hallucinate. They lose track of long tasks. They call the wrong tools at the wrong time. They generate code that compiles but doesn’t work.

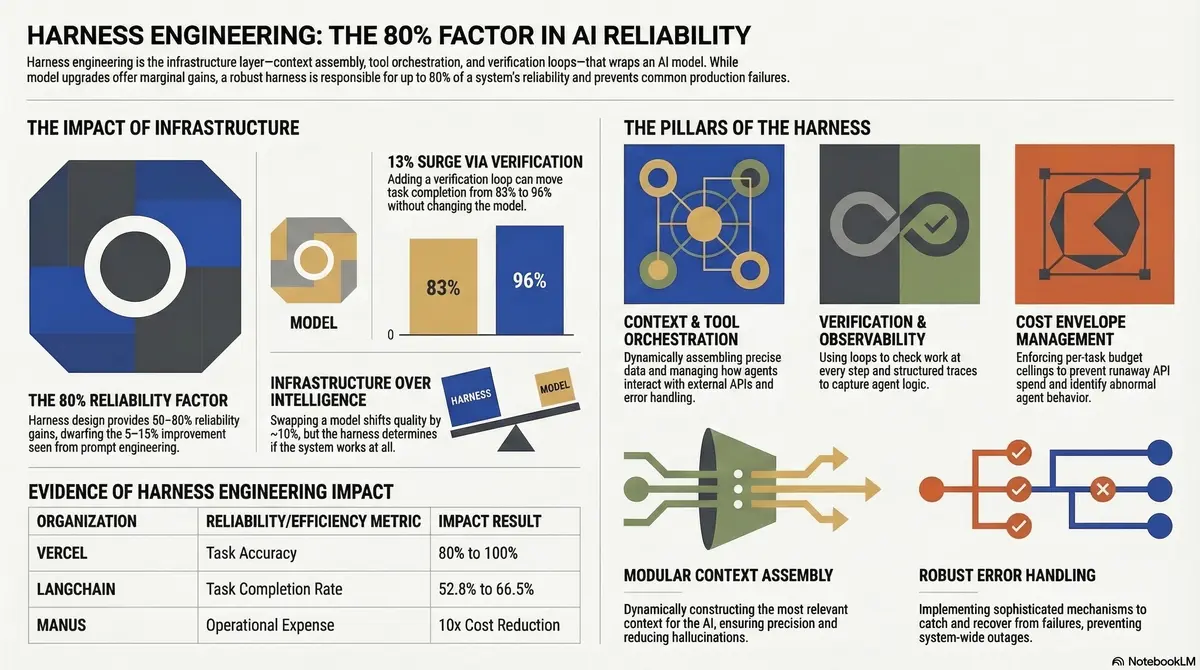

Harness engineering is the discipline of building the infrastructure that wraps around these models to make them reliable. The harness doesn’t replace the model’s intelligence. It channels it.

OpenAI’s engineering team coined the term in early 2026 when they revealed that their internal product, built with over one million lines of code, had zero human-written lines. The engineers didn’t write code. They designed the system that let AI agents write code reliably. That system is the harness.

The Definition

Harness engineering is the practice of designing environments, constraints, feedback loops, and infrastructure that make AI agents reliable at scale.

A harness engineer doesn’t build the AI model. They don’t fine-tune it or train it. Instead, they build everything around the model: the context it receives, the tools it can access, the guardrails that prevent mistakes, the verification systems that catch errors, and the recovery mechanisms that handle failures.

If a software engineer builds applications, and an ML engineer builds models, a harness engineer builds the bridge between models and real-world reliability.

Think of it through a computer science analogy: the model is the CPU, the context window is RAM, the harness is the operating system, and the agent is the application. You wouldn’t run software directly on a CPU without an operating system to manage memory, schedule processes, and handle I/O. Similarly, you wouldn’t deploy an AI agent without a harness to manage context, coordinate tools, and handle failures.

Why Harness Engineering Matters Now

Three converging trends made harness engineering necessary in 2026.

Models became commoditized. Claude, GPT-4, Gemini, and open-source alternatives perform within a narrow band of each other on standard benchmarks. The model is no longer the competitive advantage. The system around the model determines whether an agent succeeds or fails in production.

Agents moved from demos to production. In 2025, most agent deployments were demos, proofs of concept, or tightly controlled internal tools. In 2026, organizations are deploying agents that handle customer interactions, write production code, manage infrastructure, and make financial decisions. The reliability bar went from “impressive demo” to “can’t go down.”

Benchmarks stopped measuring what matters. Standard benchmarks measure single-turn task completion. But production agents run for hours, sometimes days. They execute hundreds of steps. They encounter API timeouts, rate limits, context window exhaustion, and tool failures. A one-percent benchmark improvement means nothing if the agent drifts off-track after fifty steps.

These three shifts created demand for engineers who specialize not in building models, but in building the systems that make models work reliably. That’s harness engineering.

The Three Pillars

Harness engineering rests on three interconnected pillars. Understanding these gives you the conceptual framework for everything else in the discipline.

Pillar 1: Context Engineering

Context engineering is the practice of managing what information an AI agent can access, when it accesses it, and how that information is structured.

Every AI model has a limited context window. Even models with 200,000-token windows face practical limits when agents run multi-hour tasks. The context fills up. Information gets pushed out. The agent “forgets” earlier decisions.

Context engineering solves this through several techniques:

- Context compression: Reducing the size of information in the agent’s working memory while preserving what’s relevant to the current task

- Dynamic context injection: Loading the right information at the right time, rather than stuffing everything into the initial prompt

- Knowledge persistence: Storing decisions, progress, and state outside the context window (in files, databases, or structured logs) so the agent can recover across sessions

- Priority scoring: Ranking context elements by relevance so the most important information survives compression
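Compression and priority scoring can be combined in a few lines. The following is a minimal sketch, not a production implementation: the `ContextItem` class, the `priority` values, and the word-count token estimate are all assumptions made for illustration. A real harness would use the model's tokenizer and a more considered scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    priority: float  # hypothetical score: higher means more important to keep


def estimate_tokens(text: str) -> int:
    # Crude assumption: about one token per whitespace-separated word.
    return len(text.split())


def compress_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Keep the highest-priority items that fit the token budget,
    returned in their original order."""
    ranked = sorted(enumerate(items), key=lambda pair: pair[1].priority, reverse=True)
    kept_indices = []
    used = 0
    for index, item in ranked:
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            kept_indices.append(index)
            used += cost
    return [items[i] for i in sorted(kept_indices)]


items = [
    ContextItem("System rules: never edit files outside src/", priority=1.0),
    ContextItem("Verbose log output from an earlier tool call " * 5, priority=0.1),
    ContextItem("Decision: use SQLite for local state", priority=0.8),
]
kept = compress_context(items, budget=20)
for item in kept:
    print(item.text)
```

With a budget of 20 estimated tokens, the rules and the recorded decision survive while the low-priority log output is dropped.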

In practice, a harness engineer might create a progress.txt file that the agent reads at the start of each session, summarizing what’s been accomplished and what’s next. Or they might build a system that dynamically loads relevant documentation based on the agent’s current task, rather than loading all documentation upfront.
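The progress-file idea can be sketched as follows. This is one possible shape under stated assumptions: the state is stored as JSON rather than free text, and the function names (`load_progress`, `save_progress`, `render_for_prompt`) and the `completed` / `next_steps` keys are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def load_progress(path: Path) -> dict:
    """Return saved state, or a fresh state if no file exists yet."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"completed": [], "next_steps": []}


def save_progress(path: Path, state: dict) -> None:
    # Write to a temporary file first, then rename, so a crash
    # mid-write does not leave a half-written progress file.
    tmp = path.with_suffix(path.suffix + ".tmp")
    tmp.write_text(json.dumps(state, indent=2), encoding="utf-8")
    tmp.replace(path)


def render_for_prompt(state: dict) -> str:
    """Format the state as text to place at the start of a new session."""
    done = "\n".join(f"- {item}" for item in state["completed"]) or "- (nothing yet)"
    todo = "\n".join(f"- {item}" for item in state["next_steps"]) or "- (nothing queued)"
    return f"Completed so far:\n{done}\n\nNext steps:\n{todo}"


with tempfile.TemporaryDirectory() as tmp_dir:
    progress_path = Path(tmp_dir) / "progress.json"
    state = load_progress(progress_path)      # fresh state on the first session
    state["completed"].append("Set up project skeleton")
    state["next_steps"] = ["Write parser tests"]
    save_progress(progress_path, state)
    restored = load_progress(progress_path)   # what the next session would read
    summary = render_for_prompt(restored)

print(summary)
```

Keeping the file small and structured means the agent spends few tokens re-orienting itself at the start of each session.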

Context engineering is often called the most important skill for AI developers in 2026. It directly determines whether an agent can maintain coherent behavior across extended tasks. For a deeper exploration, see our upcoming guide on context engineering fundamentals.

Pillar 2: Architectural Constraints

If context engineering is about giving the agent the right information, architectural constraints are about preventing the agent from doing the wrong things.

Production AI agents need boundaries. Without constraints, an agent tasked with refactoring code might rewrite the entire codebase. An agent managing infrastructure might delete resources it shouldn’t touch. An agent generating content might use a tone that doesn’t match the brand.

Architectural constraints include:

- Tool access controls: Defining which tools an agent can use, which files it can modify, and which operations require human approval

- Structural enforcement: Using linters, type checkers, and CI/CD pipelines to automatically verify that agent output meets quality standards

- Scope boundaries: Limiting what the agent can change in a single task to prevent cascading modifications

- Safety guardrails: Filtering agent outputs for security vulnerabilities, harmful content, or policy violations before they reach production

OpenAI’s harness engineering approach uses both AI agents and deterministic linters to enforce architecture. The deterministic checks catch structural violations immediately. The AI agents catch subtler issues like inconsistent naming conventions or documentation drift.

The key insight is that constraints don’t reduce agent capability. They increase it. An agent operating within well-defined boundaries can act with more autonomy because the harness catches mistakes. Without constraints, you need constant human oversight, which defeats the purpose of using agents.
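To make tool access controls and scope boundaries concrete, here is a small sketch of a policy check that a harness might run before executing any tool call. The `ToolPolicy` class, its field names, and the three-way `allow` / `deny` / `ask_human` result are assumptions for illustration, not the API of any particular framework.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import Optional


@dataclass
class ToolPolicy:
    allowed_tools: set[str]
    writable_roots: list[str]
    needs_approval: set[str] = field(default_factory=set)

    def check(self, tool: str, path: Optional[str] = None) -> str:
        """Return 'allow', 'deny', or 'ask_human' for a proposed tool call."""
        if tool not in self.allowed_tools:
            return "deny"
        if tool in self.needs_approval:
            return "ask_human"
        if path is not None and not self._is_writable(path):
            return "deny"
        return "allow"

    def _is_writable(self, path: str) -> bool:
        target = PurePosixPath(path)
        # Reject parent-directory hops such as "src/../secrets".
        if ".." in target.parts:
            return False
        return any(target.is_relative_to(root) for root in self.writable_roots)


policy = ToolPolicy(
    allowed_tools={"read_file", "write_file", "deploy"},
    writable_roots=["src", "tests"],
    needs_approval={"deploy"},
)

print(policy.check("write_file", "src/app.py"))     # inside an allowed root
print(policy.check("write_file", "infra/prod.tf"))  # outside the agent's scope
print(policy.check("deploy"))                       # requires a human
print(policy.check("delete_database"))              # tool was never granted
```

Because the check is deterministic, the agent can be given more freedom inside `src` and `tests` without anyone watching every call.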

Pillar 3: Entropy Management

Over time, AI-generated code and content accumulate inconsistencies. Variable naming drifts. Documentation falls out of sync with implementation. Dead code accumulates. Test coverage drops. This degradation is entropy.

In traditional software development, humans notice and correct entropy naturally during code reviews, refactoring sessions, and knowledge sharing. In agent-driven development, entropy accelerates because agents produce code faster than humans can review it.

Entropy management techniques include:

- Periodic audits: Scheduled agent tasks that scan for inconsistencies, dead code, and documentation drift

- Automated cleanup: Agents dedicated to refactoring, test maintenance, and documentation updates

- Regression detection: Systems that flag when agent behavior degrades over time

- Model drift monitoring: Detecting when a model’s output quality changes due to provider updates or context degradation
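Regression detection can start very simply. The sketch below tracks pass/fail results from some recurring check over a sliding window and flags when the pass rate falls noticeably below a baseline. The `RegressionDetector` class, the window size, and the tolerance are illustrative assumptions. What counts as a "pass" depends entirely on your own evaluation.

```python
from collections import deque
from typing import Optional


class RegressionDetector:
    def __init__(self, baseline_rate: float, window: int = 20, tolerance: float = 0.1):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.results: deque = deque(maxlen=window)  # oldest results fall off automatically

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def current_rate(self) -> Optional[float]:
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def is_degraded(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alarms.
        if len(self.results) < self.results.maxlen:
            return False
        return self.current_rate() < self.baseline_rate - self.tolerance


detector = RegressionDetector(baseline_rate=0.9, window=10, tolerance=0.1)

for _ in range(10):
    detector.record(True)
healthy = detector.is_degraded()

for _ in range(3):
    detector.record(False)  # e.g. after a provider-side model update
degraded = detector.is_degraded()

print(healthy, degraded)
```

The same structure works for model drift monitoring if the recorded result comes from a fixed set of evaluation tasks that is rerun on a schedule.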

Martin Fowler’s analysis highlights that harness engineering creates two future worlds: pre-AI applications that need retrofitting, and post-AI applications designed from the start for agent maintenance. Understanding entropy management is essential for both.

How Harness Engineering Differs from Related Disciplines

If you’re coming from a related field, here’s how harness engineering fits into the landscape:

| Discipline | Focus | Relationship to Harness Engineering |
|---|---|---|
| Prompt engineering | Crafting effective inputs to language models | A component of context engineering (Pillar 1) |
| ML engineering | Training, fine-tuning, and deploying models | Separate discipline; harness engineering assumes the model exists |
| Agent engineering | Building agent logic, tools, and workflows | Complementary; agent engineers build the agent, harness engineers build the system around it |
| DevOps / Platform engineering | Infrastructure, CI/CD, deployment | Overlapping skills; harness engineering applies similar principles to AI-specific challenges |
| Context engineering | Managing information flow to AI systems | A core pillar of harness engineering, not the whole discipline |

The simplest distinction: prompt engineering optimizes a single interaction. Agent engineering optimizes an agent’s logic. Harness engineering optimizes the entire system that makes the agent reliable.

Getting Started: What to Learn First

If you’re interested in harness engineering, here’s a practical learning path organized by skill level.

Foundation (Weeks 1-4)

- Understand how LLM APIs work. Build a simple application using the OpenAI or Anthropic API directly. No frameworks. This gives you the baseline understanding of prompts, completions, and tool use.

- Learn context window management. Experiment with long conversations. Watch how the model’s behavior changes as the context fills up. Try summarizing earlier context to free up space. This is context engineering in its simplest form.

- Build a simple agent with tool use. Create an agent that can call 2-3 tools (file reading, web search, calculation). Observe where it fails. These failure modes are what harness engineering solves.
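For the tool-use exercise in the list above, the core loop is small enough to sketch without any framework. In this example the model is replaced by a scripted stand-in (`scripted_model`, a hypothetical function) so the code runs without an API key. In your own version you would swap it for a real LLM call. The tool names and the action format are also assumptions.

```python
from typing import Callable

# Tools the agent may call. Each takes a string argument and returns a string.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(float(x) for x in arg.split())),
    "upper": lambda arg: arg.upper(),
}


def scripted_model(history: list[str]) -> dict:
    """Stand-in for a real LLM call: returns the next scripted action."""
    script = [
        {"tool": "add", "arg": "2 3"},
        {"tool": "search", "arg": "weather"},  # not registered: a typical failure
        {"final": "done"},
    ]
    return script[min(len(history), len(script) - 1)]


def run_agent(model: Callable[[list[str]], dict], max_steps: int = 5) -> list[str]:
    history: list[str] = []
    for _ in range(max_steps):  # hard step limit so the loop always ends
        action = model(history)
        if "final" in action:
            history.append(f"final: {action['final']}")
            break
        tool = TOOLS.get(action["tool"])
        if tool is None:
            # Record the failure so the model can see it on the next turn.
            history.append(f"error: unknown tool {action['tool']!r}")
            continue
        try:
            history.append(f"{action['tool']} -> {tool(action['arg'])}")
        except Exception as exc:
            history.append(f"error: {action['tool']} raised {exc}")
    return history


trace = run_agent(scripted_model)
for line in trace:
    print(line)
```

Even in this toy version, two harness concerns already appear: a step limit that stops runaway loops, and error messages fed back into the history instead of crashing the run.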

Intermediate (Months 2-3)

- Study production agent architectures. Read Anthropic’s guide on effective harnesses for long-running agents. Study how they structure initializer agents, progress files, and session bridging.

- Build a multi-session agent. Create an agent that works on a task across multiple context windows. Implement progress persistence and state recovery. This is where harness engineering becomes essential.

- Implement guardrails. Add constraints to your agent: tool access controls, output validation, and scope limits. Measure how constraints affect both reliability and autonomy.

Advanced (Months 4-6)

- Build a complete harness. Design the full infrastructure for a production agent: context management, tool orchestration, state persistence, error recovery, monitoring, and evaluation.

- Study evaluation frameworks. Learn to measure agent reliability systematically. Build eval datasets. Run regression tests. This is where entropy management becomes critical.

- Contribute to open-source harness projects. Apply your skills to frameworks like LangChain, Claude Agent SDK, or CrewAI. Understanding multiple approaches deepens your expertise. For tool comparisons, see our framework comparison guide on agent-harness.ai.
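Of the pieces listed under "Build a complete harness," error recovery is probably the easiest to prototype in isolation. Below is a minimal sketch of retrying a flaky call with exponential backoff. `TransientError` and `call_with_retries` are hypothetical names, and the delays are arbitrary. The `sleep` parameter is injectable only so the example runs instantly.

```python
import time
from typing import Callable


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def call_with_retries(
    fn: Callable[[], str],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Double the wait after each failed attempt: 0.5s, 1s, 2s, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("max_attempts must be at least 1")


calls = {"count": 0}


def flaky_tool() -> str:
    # Fails twice, then succeeds, to imitate a briefly rate-limited API.
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("rate limited")
    return "ok"


delays: list[float] = []
result = call_with_retries(flaky_tool, sleep=delays.append)
print(result, delays)
```

Only errors explicitly marked as transient are retried. Anything else propagates immediately, which keeps genuine bugs from being hidden behind retries.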

FAQ

Do I need a machine learning background to learn harness engineering?

No. Harness engineering is closer to systems engineering and DevOps than to ML research. You need to understand how LLM APIs work, but you don’t need to understand transformer architecture or training procedures. Strong software engineering fundamentals matter more than ML expertise.

Is harness engineering a real job title?

It’s emerging. As of early 2026, you’ll find it in forward-thinking AI companies and teams using AI agents heavily. The skills are in demand even where the title hasn’t been formalized. Look for roles described as “AI infrastructure engineer,” “agent platform engineer,” or “AI systems engineer.” For career details, see our upcoming harness engineer career guide.

What’s the difference between harness engineering and MLOps?

MLOps focuses on the lifecycle of machine learning models: training, deployment, monitoring, retraining. Harness engineering focuses on the system around deployed models that makes agent behavior reliable. There’s overlap in monitoring and infrastructure, but the core concerns differ. MLOps asks “is the model performing well?” Harness engineering asks “is the agent producing reliable results in context?”

How long does it take to build a production-ready harness?

For a single-agent system with defined scope: 2-4 weeks. For a multi-agent system with complex orchestration, state management, and evaluation: 2-6 months. The investment pays off through reduced debugging time and increased agent reliability.

Which programming language should I learn for harness engineering?

Python dominates the AI agent ecosystem. Most frameworks, SDKs, and tools have Python as their primary language. TypeScript is the second most common, especially for web-based agent systems. Learn Python first, add TypeScript when needed.

Your Next Step

Harness engineering is new enough that getting started now puts you ahead of most engineers. The demand is growing faster than the supply of practitioners.

This week: Build a simple agent using a raw LLM API. Watch where it fails. Those failures are the problems harness engineering solves.

This month: Read the foundational resources: OpenAI’s harness engineering post and Anthropic’s effective harnesses guide. These two documents define the current state of the discipline.

This quarter: Follow our academy content as we publish deep dives into each pillar: context engineering, architectural constraints, entropy management, and the practical skills that connect them.

If you want to stay current as the discipline evolves, subscribe to the academy newsletter for tutorials, career guides, and learning paths delivered to your inbox.